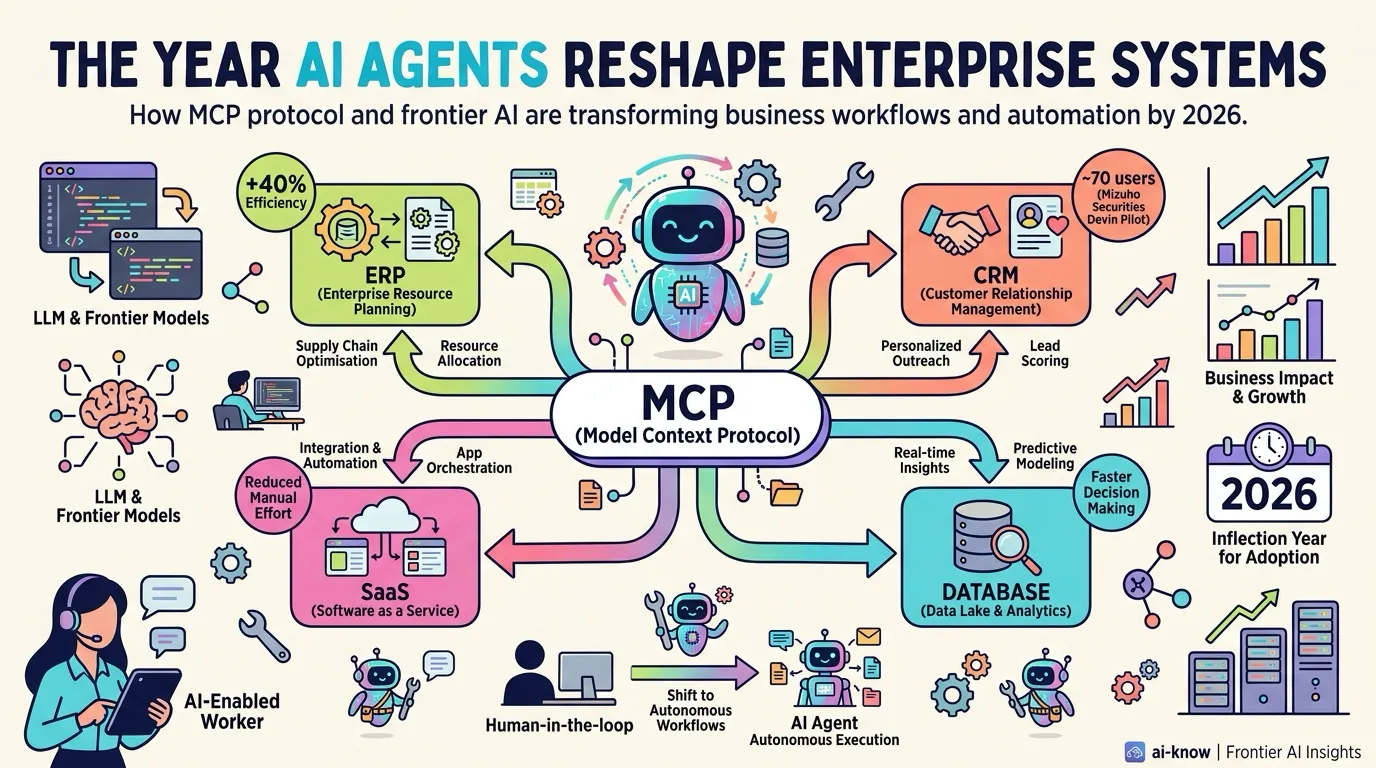

Human-in-the-Loop (HITL)

Human-in-the-Loop (HITL) is a design pattern that embeds human approval or correction steps into an AI system's decision-making process. Pioneered by Western AI safety research communities, it offers a structured way to balance full automation against full human control by sorting decisions into risk tiers.

A common implementation pattern uses three tiers: low-risk repetitive work runs fully automated, high-risk decisions (money, contracts, personnel) always require human approval, and the middle ground operates under conditional approval, where a human is brought in only when a threshold is exceeded. For enterprise AI agent deployments, getting this design right often determines whether the rollout succeeds or stalls.
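
The three tiers can be sketched as a small routing function. This is a minimal illustration, not a reference implementation: the category names, the `impact` and `confidence` fields, and both threshold values are hypothetical placeholders that a real deployment would replace with its own risk policy.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO = "auto"                      # execute without human review
    HUMAN_APPROVAL = "human_approval"  # pause until a human approves


# Hypothetical category labels; a real system would define its own taxonomy.
HIGH_RISK_CATEGORIES = {"money", "contract", "personnel"}
LOW_RISK_CATEGORIES = {"routine"}


@dataclass
class Decision:
    category: str
    impact: float = 0.0      # assumed numeric impact score (higher = riskier)
    confidence: float = 1.0  # assumed model confidence in [0, 1]


def route(decision: Decision,
          impact_limit: float = 100.0,
          min_confidence: float = 0.9) -> Route:
    """Pick a tier for a decision; thresholds are illustrative defaults."""
    # Tier 1: high-risk categories always go to a human.
    if decision.category in HIGH_RISK_CATEGORIES:
        return Route.HUMAN_APPROVAL
    # Tier 2: low-risk repetitive work runs fully automated.
    if decision.category in LOW_RISK_CATEGORIES:
        return Route.AUTO
    # Tier 3: everything else is conditional; escalate only when
    # a threshold is exceeded.
    if decision.impact > impact_limit or decision.confidence < min_confidence:
        return Route.HUMAN_APPROVAL
    return Route.AUTO
```

For example, `route(Decision("contract"))` escalates regardless of its scores, while `route(Decision("support_reply", impact=10.0, confidence=0.95))` stays automated until either threshold is crossed. Checking the high-risk categories first means a misconfigured threshold cannot let a high-risk decision through unreviewed.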